This is a guest post. The views expressed here are solely those of the authors and do not represent positions of IEEE Spectrum or the IEEE.

The degree to which large language models (LLMs) might “memorize” some of their training inputs has long been a question, raised by scholars including Google DeepMind’s Nicholas Carlini and the first author of this article (Gary Marcus). Recent empirical work has shown that LLMs are in some instances capable of reproducing, or reproducing with minor changes, substantial chunks of text that appear in their training sets.

For example, a 2023 paper by Milad Nasr and colleagues showed that LLMs can be prompted into dumping private information such as email addresses and phone numbers. Carlini and coauthors recently showed that larger chatbot models (though not smaller ones) sometimes regurgitated large chunks of text verbatim.

Similarly, the recent lawsuit that The New York Times filed against OpenAI showed many examples in which OpenAI software re-created New York Times stories nearly verbatim (words in red are verbatim):

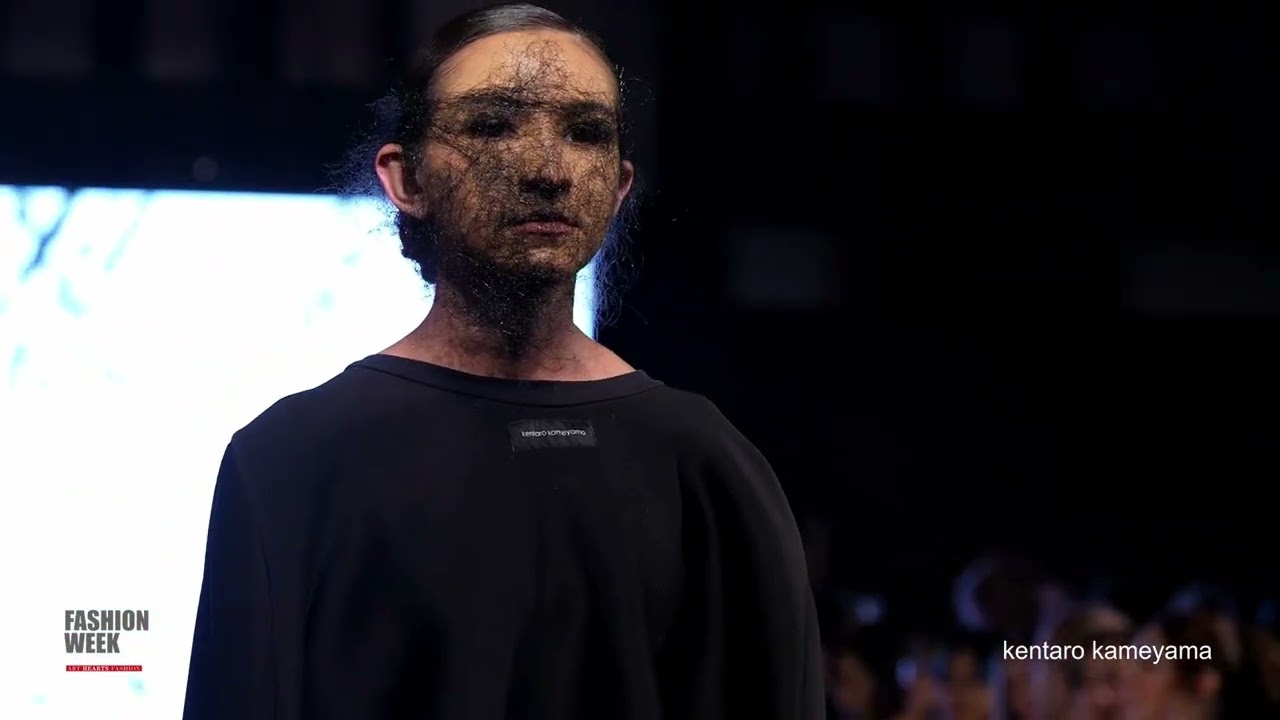

An exhibit from a lawsuit shows seemingly plagiaristic outputs by OpenAI’s GPT-4. New York Times

We will call such near-verbatim outputs “plagiaristic outputs,” because if a human created them we would call them prima facie instances of plagiarism. Aside from a few brief remarks later, we leave it to lawyers to reflect on how such materials might be treated in full legal context.

In the language of mathematics, these examples of near-verbatim reproduction are existence proofs. They do not directly answer the questions of how often such plagiaristic outputs occur or under precisely what circumstances they occur.

These results provide powerful evidence…that at least some generative AI systems may produce plagiaristic outputs, even when not directly asked to do so, potentially exposing users to copyright infringement claims.

Questions about how often, and under what circumstances, plagiaristic outputs occur are hard to answer with precision, in part because LLMs are “black boxes,” systems in which we do not fully understand the relation between inputs (training data) and outputs. What’s more, outputs can vary unpredictably from one moment to the next. The prevalence of plagiaristic responses likely depends heavily on factors such as the size of the model and the exact nature of the training set. Because these systems are opaque even to their own makers, whether open-sourced or not, questions about plagiaristic prevalence can probably only be answered experimentally, and perhaps even then only tentatively.

Even though prevalence may vary, the mere existence of plagiaristic outputs raises many important questions, including technical questions (can anything be done to suppress such outputs?), sociological questions (what could happen to journalism as a consequence?), legal questions (would these outputs count as copyright infringement?), and practical questions (when an end user generates something with an LLM, can the user feel comfortable…


The post “Generative AI Has a Visual Plagiarism Problem” by Reid Southen was published on 01/06/2024 by spectrum.ieee.org