Although the race to power the massive ambitions of AI companies might seem like it’s all about Nvidia, there is a real competition going in AI accelerator chips. The latest example: At

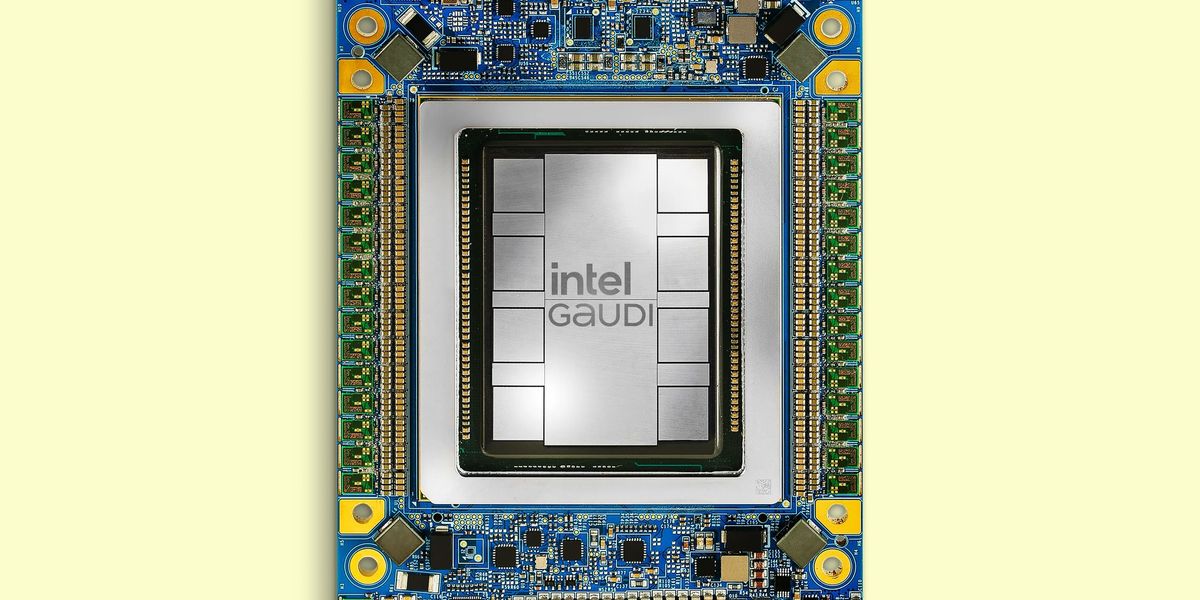

Intel’s Vision 2024 event this week in Phoenix, Ariz., the company gave the first architectural details of its third-generation AI accelerator, Gaudi 3.

With the predecessor chip, the company had touted how close to parity its performance was to Nvidia’s top chip of the time, H100, and claimed a superior ratio of price versus performance. With Gaudi 3, it’s pointing to large-language-model (LLM) performance where it can claim outright superiority. But looming in the background is Nvidia’s next GPU, the Blackwell B200, expected to arrive later this year.

Gaudi Architecture Evolution

Gaudi 3 doubles down on its predecessor Gaudi 2’s architecture, literally in some cases. Instead of Gaudi 2’s single chip, Gaudi 3 is made up of two identical silicon dies joined by a high-bandwidth connection. Each has a central region of 48 megabytes of cache memory. Surrounding that is the chip’s AI workforce: four engines for matrix multiplication and 32 programmable units called tensor processor cores. All that is surrounded by connections to memory and capped with media processing and network infrastructure at one end.

Intel says that all that combines to produce double the AI compute of Gaudi 2 using 8-bit floating-point infrastructure that has emerged as key to training transformer models. It also provides a fourfold boost for computations using the BFloat 16 number format.
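For readers unfamiliar with the BFloat 16 format mentioned above, the sketch below shows the general idea in Python: a bfloat16 value keeps the sign bit, the 8 exponent bits, and the top 7 mantissa bits of a 32-bit float, so it covers the same range with less precision. This illustrates the number format only, not Gaudi 3's hardware. The function names are hypothetical, and the conversion simply truncates, which is an assumption made for brevity; real hardware typically rounds.

```python
import struct


def float32_bits(x: float) -> int:
    """Return the IEEE 754 single-precision bit pattern of x as an integer."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def to_bfloat16_bits(x: float) -> int:
    """Keep only the upper 16 bits of the float32 pattern.

    Assumption: plain truncation (round toward zero) for simplicity.
    """
    return float32_bits(x) >> 16


def from_bfloat16_bits(bits: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    return struct.unpack("<f", struct.pack("<I", (bits & 0xFFFF) << 16))[0]


def bfloat16_round_trip(x: float) -> float:
    """Show what value survives after storing x as bfloat16."""
    return from_bfloat16_bits(to_bfloat16_bits(x))
```

Calling `bfloat16_round_trip(3.14159)` gives 3.140625: the exponent survives intact, but only about two to three decimal digits of precision remain.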

Gaudi 3 LLM Performance

Intel projects a 40 percent faster training time for the GPT-3 175B large language model versus the H100 and even better results for the 7-billion and 8-billion parameter versions of Llama2.

For inferencing, the contest was much closer, according to Intel, where the new chip delivered 95 to 170 percent of the performance of H100 for two versions of Llama. Though for the Falcon 180B model, Gaudi 3 achieved as much as a fourfold advantage. Unsurprisingly, the advantage was smaller against the Nvidia H200—80 to 110 percent for Llama and 3.8x for Falcon.

Intel claims more dramatic results when measuring power efficiency, where it projects as much as 220 percent of H100’s value on Llama and 230 percent on Falcon.

“Our customers are telling us that what they find limiting is getting enough power to the data center,” says Intel’s Habana Labs chief operating officer Eitan Medina.

The energy-efficiency results were best when the LLMs were tasked with delivering a longer output. Medina puts that advantage down to the Gaudi architecture’s large-matrix math engines. These are 512 bits across. Other architectures use many smaller engines to perform the same calculation, but Gaudi’s supersize version “needs almost an order of magnitude less memory bandwidth to feed it,” he says.
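One way to see why a larger matrix engine can need less memory bandwidth is a back-of-the-envelope model, sketched below in Python. It assumes a square engine that holds a tile of partial sums and, on each step, reads one column of one input matrix and one row of the other. That is a textbook simplification, not a description of Intel's or Nvidia's actual designs, and the tile sizes used are hypothetical.

```python
from fractions import Fraction


def operands_per_mac(tile: int) -> Fraction:
    """Input operands fetched per multiply-accumulate for a tile x tile engine.

    Simplified model (an assumption): each step loads `tile` values from one
    matrix and `tile` from the other, then performs tile * tile MACs.
    """
    loads_per_step = 2 * tile
    macs_per_step = tile * tile
    return Fraction(loads_per_step, macs_per_step)


def traffic_ratio(small_tile: int, large_tile: int) -> Fraction:
    """How many times more operand traffic per MAC the smaller engine needs."""
    return operands_per_mac(small_tile) / operands_per_mac(large_tile)
```

In this model, operand traffic per multiply-accumulate falls as 2 divided by the tile width, so `traffic_ratio(32, 256)` works out to 8. The specific numbers are illustrative only, but the scaling is in line with the kind of bandwidth saving Medina describes.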

Gaudi 3 Versus Blackwell

It’s speculation to compare…


The post “Intel’s Gaudi 3 Goes After Nvidia” by Samuel K. Moore was published on 04/09/2024 by spectrum.ieee.org