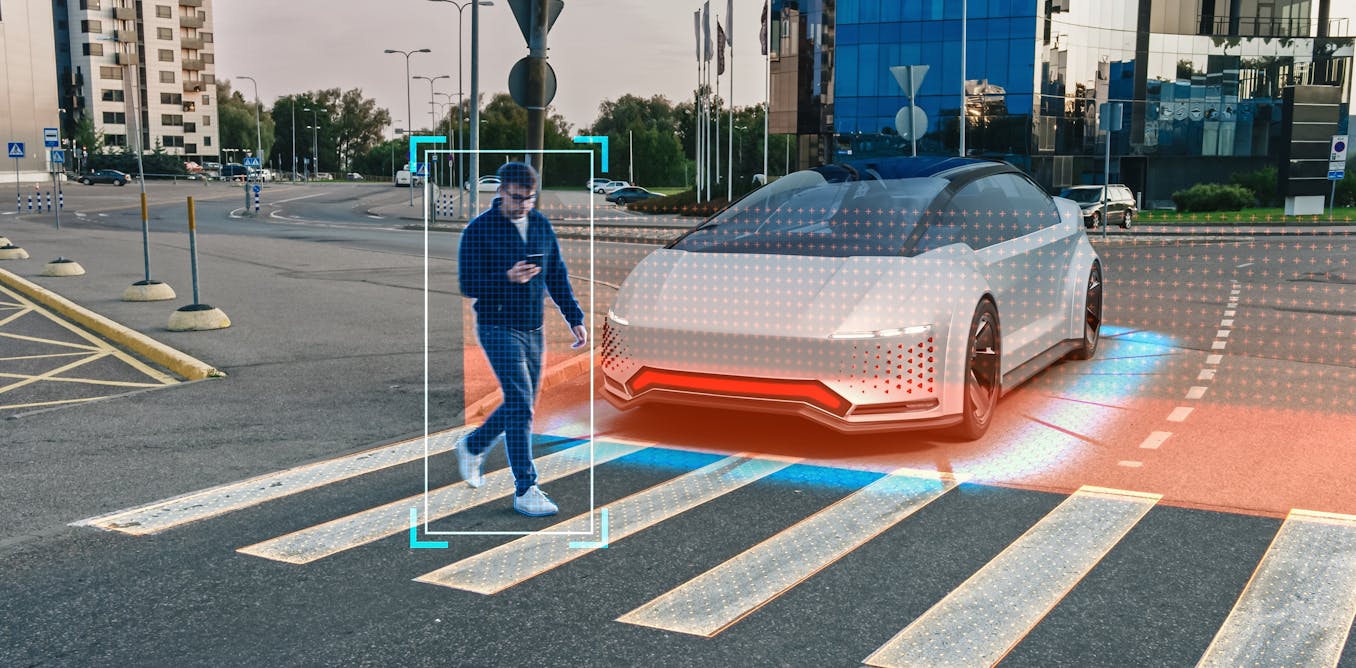

Self-driving vehicles are being deployed in numerous cities globally, but persistent controversies continue to challenge their rollout.

Recently, Tesla recalled more than two million cars after the US regulator found problems with its driver assistance system. Tesla did not agree with the US National Highway Traffic Safety Administration’s (NHTSA) analysis, but agreed to add new features.

Tesla’s Autopilot system is not fully autonomous, since a human driver has to be present at all times. But autonomous, self-driving cars have already been deployed as driverless taxis, or “robotaxis”, in several US cities, including San Francisco and Phoenix.

Cruise, the robotaxi company owned by General Motors, recently had its operational license in California suspended after just two months of fare-charging operations. The company subsequently halted operations across the US and its CEO soon departed.

This followed several high-profile incidents. In October, a Cruise vehicle dragged a pedestrian to the side of the street after they were hit by another car. As the company’s website explained: “The AV detected a collision, bringing the vehicle to a stop; then attempted to pull over to avoid causing further road safety issues, pulling the individual forward approximately 20 feet.”

But there have also been several reported cases of self-driving cars halting in the road, including in cases where emergency vehicles were nearby.

The halting problem

These incidents highlight a tendency by self-driving cars to stop in the middle of the road as soon as they encounter perceived problems. As human motorists will know, it is not always safe to do so and can cause even bigger problems on the road.

This behaviour by the car’s software goes to the heart of a deeper challenge: how can self-driving cars be designed so that their understanding of driving and behaviour on the road is as good as a human’s?

In our research, we combined our experience of designing self-driving cars at Nissan with a new approach that uses video to understand driving behaviour. We used video recordings of self-driving cars to understand the mistakes these vehicles make on the road.

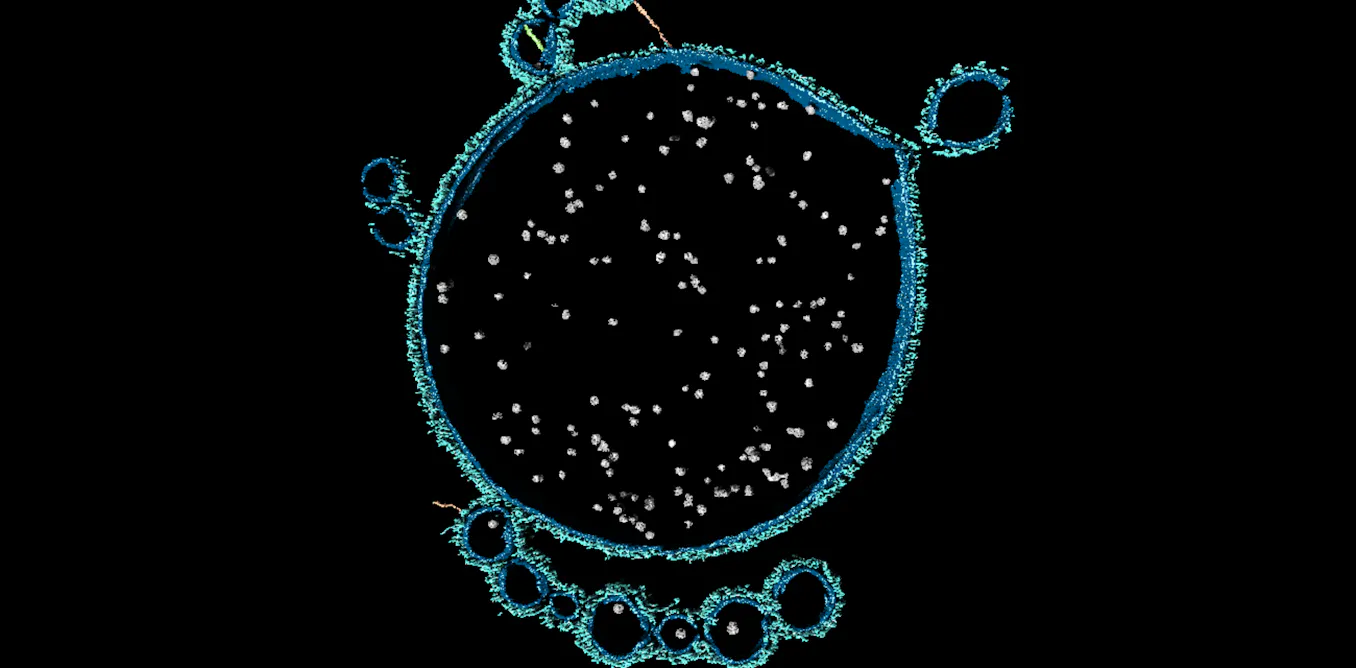

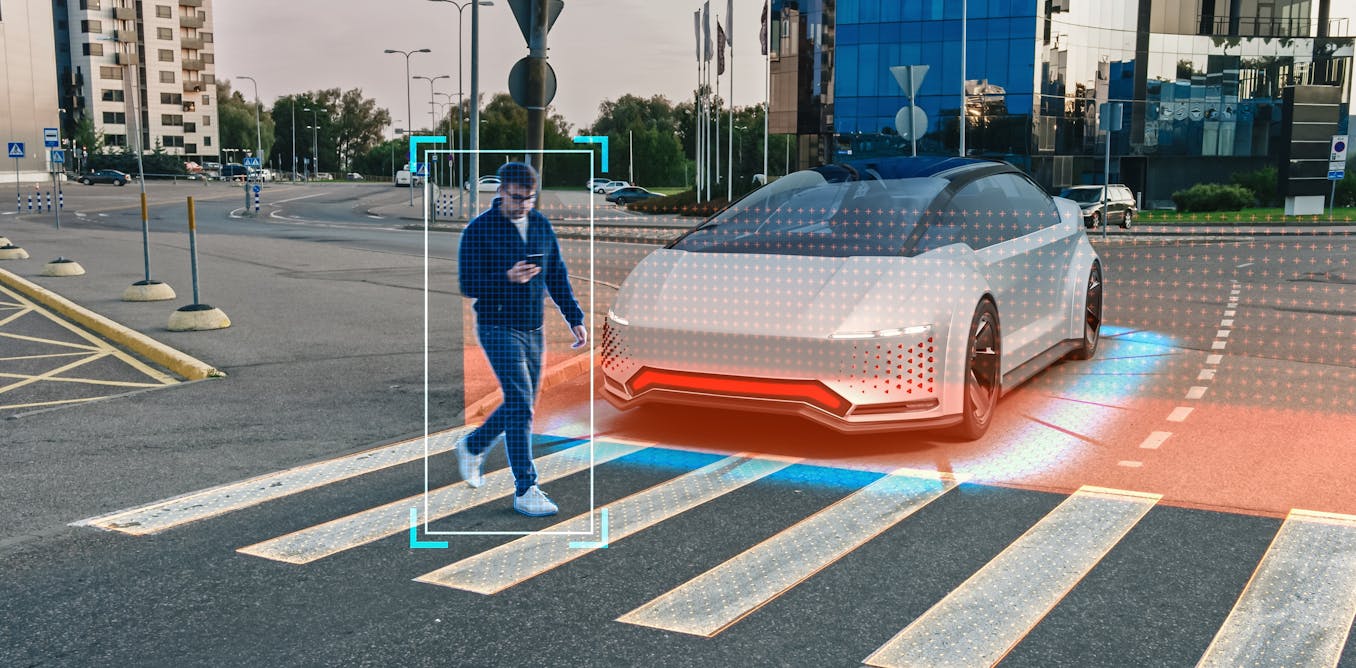

As the incidents mentioned previously show, the perception that a self-driving vehicle has of the road is not necessarily the same as a human’s. A self-driving car constructs a simplified picture of the world from sensor data that ignores an enormous amount of detail from the real – social – world. Autonomous driving systems identify the world through abstract categories, such as cars, bicyclists, pedestrians, trucks and so on.

Iv-olga / Shutterstock

Every human-shaped blob on the video stream is considered a pedestrian, without the distinctions that human drivers may rely on, such as whether a person is marching in a demonstration or running after a bus. Our human sight is trained from childhood onwards, and we count on others to see things the same way we do.

Consider the case of the pedestrian who was dragged along by the robotaxi. If you hit someone, you may not be able to directly see the person your car has just hit, but you know that they have not just disappeared. Our sense of object persistence would lead us to stop and check if that person needs medical attention.

Such situations are known in the software industry as “edge cases”: relatively rare cases that are not anticipated by developers.

A fundamental assumption underpinning self-driving cars is that the number of unusual situations is finite. But there are good reasons to think that the real world is not at all finite and that there will always be entirely new, never-before-seen edge cases.

Nuanced behaviour

When humans encounter a totally new situation, we use judgement about what to do. We do not just execute the action associated with the “most similar” situation in our memories.

Self-driving cars lack this judgement, and so can either make a guess, or resort to a supposedly neutral or safe solution: stopping. In our video recordings of self-driving cars, their most common behaviour in an unusual situation is to simply halt on the road.
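To make this pattern concrete, here is a toy sketch in Python of a "halt by default" decision rule. It is not how any real vehicle's planner works: the `Scene` type, the `KNOWN_ACTIONS` table, the action names and the confidence threshold are all invented for illustration. It only shows how everything unfamiliar can collapse into a single response.

```python
from dataclasses import dataclass

# Hypothetical threshold: below this, the scene is treated as unfamiliar.
CONFIDENCE_THRESHOLD = 0.8


@dataclass
class Scene:
    label: str         # coarse category assigned by perception
    confidence: float  # how closely the scene matches something seen before (0.0 to 1.0)


# Hypothetical table of anticipated situations and their planned actions.
KNOWN_ACTIONS = {
    "clear_road": "proceed",
    "red_light": "wait_at_line",
    "four_way_stop": "take_turn",
}


def choose_action(scene: Scene) -> str:
    """Return the planned action, falling back to halting when unsure."""
    if scene.confidence >= CONFIDENCE_THRESHOLD and scene.label in KNOWN_ACTIONS:
        return KNOWN_ACTIONS[scene.label]
    # Anything unanticipated or uncertain collapses to the "neutral" choice.
    return "halt_in_lane"
```

In this sketch, a four-way stop where another driver hesitates might arrive with low confidence, and a slightly misplaced cone might not match any known label at all. Both end in the same place: `halt_in_lane`.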

However, stopping in the road might not necessarily be the safest choice, especially if it involves stopping in front of a fire truck. This not only blocks traffic, but also creates a hazard in itself. Our videos contain examples of this “halting” in the most banal of situations – such as a four-way stop where a driver is slow in entering the junction, or where a traffic cone has been slightly misplaced.

Human drivers can resolve such misunderstandings with gestures, the use of the horn, or perhaps even just a glance in a particular direction. Yet driverless cars can do none of these things. Indeed, their continual misunderstandings of human intent mean that basic problems actually arise much more commonly.

While we have serious concerns over the safety of self-driving cars, we are also concerned about how they can block and disrupt traffic through their inability to deal with many ordinary traffic situations.

In a recent paper we proposed some potential solutions for designing the motion of self-driving cars so that they can be better understood by other road users. We discussed five basic movement elements: gaps, speed, position, indicating and stopping.

Together, these elements can be combined to make and accept offers with other road users, show urgency, make requests and display preferences.
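As a purely illustrative sketch, the idea of combining elements into messages could be modelled as below. The five element names come from the list above, but the specific message names and the combinations that express them are assumptions made up for this example, not a specification taken from the paper.

```python
from enum import Enum


class Element(Enum):
    GAPS = "gaps"
    SPEED = "speed"
    POSITION = "position"
    INDICATING = "indicating"
    STOPPING = "stopping"


# Hypothetical combinations: each message is expressed by a set of elements.
MESSAGES = {
    "offer_right_of_way": frozenset({Element.SPEED, Element.GAPS}),
    "request_to_merge": frozenset({Element.INDICATING, Element.POSITION}),
    "show_urgency": frozenset({Element.SPEED, Element.POSITION}),
    "accept_offer": frozenset({Element.STOPPING, Element.GAPS}),
}


def interpret(observed: set) -> list:
    """Return every message whose elements are all present in what was observed."""
    return sorted(name for name, needed in MESSAGES.items() if needed <= observed)
```

For instance, `interpret({Element.SPEED, Element.GAPS})` matches only the hypothetical `offer_right_of_way` entry: slowing down while leaving a gap. A real design would also need direction and timing, such as whether the car is speeding up or slowing down, which this sketch leaves out.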

Whatever the future possibilities of self-driving cars, researchers need to resolve these problems before the vehicles are deployed more widely and the same “halting” issues are replicated worldwide.

The post “stopping dead seems to be a default setting when they encounter a problem — it can cause chaos on roads” by Barry Brown, Professor of Human Computer Interaction, Stockholm University was published on 01/03/2024 by theconversation.com