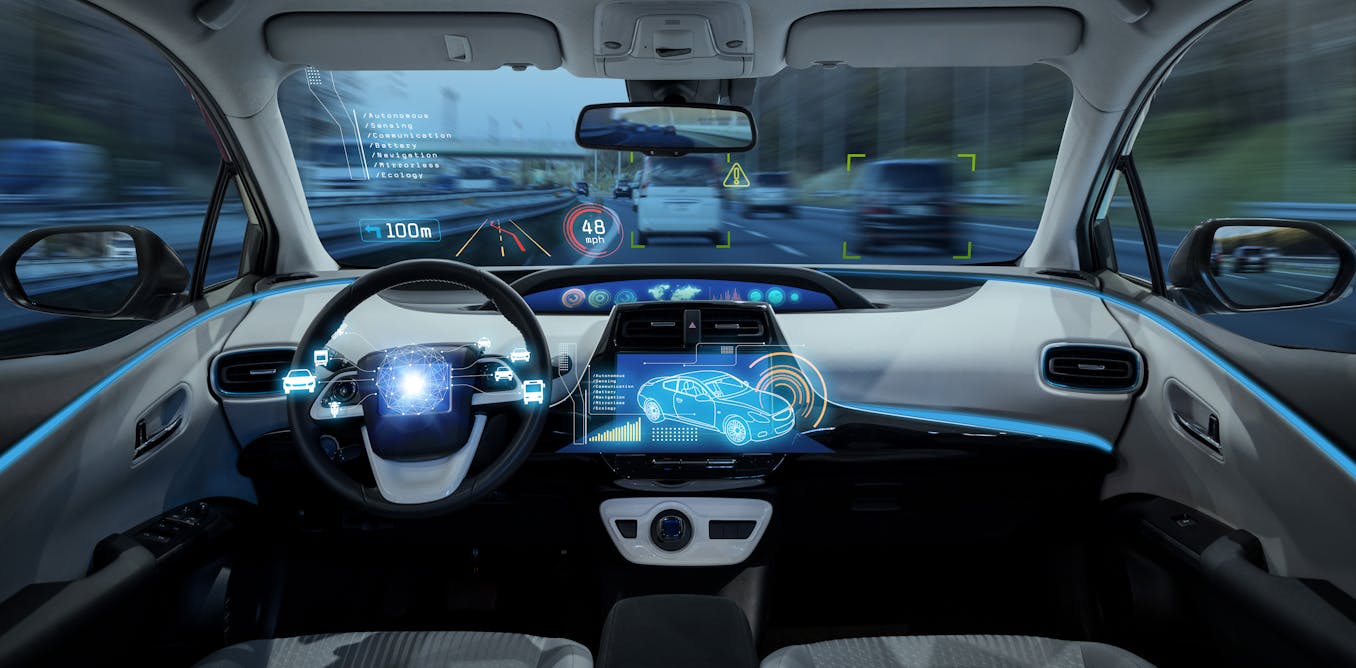

Tesla has recalled 2 million US vehicles over concerns about its Autopilot function. Autopilot is meant to help with manoeuvres such as steering and acceleration, but still needs input from the driver. The recall comes just a few days after a whistle-blowing former Tesla employee cast doubt on the safety of the Autopilot function.

A simple internet search reveals several reported cases where the cars have made errors in identifying objects on the road. For instance, Tesla cars have mistaken an image of a stop sign on a billboard for the real thing and confused a yellow moon with a yellow traffic light.

There have also been numerous recent examples of problems with the “robotaxis” operating in San Francisco. These incidents raise questions about whether the technology that enables vehicles to operate autonomously is ready for the real world.

The driving force behind self-driving vehicles is artificial intelligence (AI), yet current algorithms lack the human-like understanding and reasoning needed to grasp context when driving. This includes advanced contextual reasoning for interpreting complex visual cues, such as obscured objects, and for inferring unseen elements in the environment.

Social interaction

Furthermore, these vehicles must be capable of counterfactual reasoning – evaluating hypothetical scenarios and predicting potential outcomes. This is a crucial skill for decision making in dynamic driving situations.

For instance, when an autonomous vehicle (AV) approaches a busy intersection with traffic lights, it must not only obey the current traffic signals but also predict the actions of other road users and consider how those might change under different circumstances.

An example of this scenario is provided by a 2017 accident in which an Uber robotaxi drove through a yellow light in Arizona and collided with another car. At the time, there were questions about whether a human driver would have approached the situation differently.

Additionally, social interaction – an area where humans excel and robots falter – is essential. For example, on urban roads with cars parked along both sides, it’s not always clear who has the right of way and we use social skills to negotiate a fair way to proceed.

At roundabouts, it’s common for several cars to arrive at once, making it unclear who has right of way. Again, social skills allow drivers to safely pull onto the roundabout.

To ensure seamless coexistence with AI-driven cars, we urgently need to develop groundbreaking algorithms capable of human-like thinking, social interaction, adaptation to new situations and learning with experience. Such algorithms would enable AI systems to comprehend nuanced human driver behaviour, react to unforeseen road conditions, prioritise decision making that factors in human values and interact socially with other road users.

As we integrate AI-driven vehicles into existing traffic, the kinds of standards we’ve been using to assess and validate the success of autonomous driving systems will become insufficient. There is a pressing need for new standards and mechanisms to assess the capabilities of these driverless cars.

Specific uses

These new protocols should provide more rigorous testing and validation methods, ensuring that AI-driven vehicles meet the highest standards of safety, performance and interoperability (where AI systems from different manufacturers can “understand” one another and work together). In doing so, they will establish a foundation for a safer, more harmonious traffic environment where driverless and human-driven cars mix.

It would be a mistake to write off fully self-driving cars, even without the developments which are needed. There is still a place for them, albeit not as ubiquitously as the rapid spread of Tesla vehicles might indicate. We’ll initially need them for specific uses such as autonomous shuttles and highway driving. Alternatively, they could be used in special environments with their own dedicated infrastructure.

For instance, autonomous buses could drive a predefined route with a dedicated lane. Autonomous trucks could also have a separate lane on motorways. However, it’s crucial that uses focus on benefiting the entire community, not just a specific – usually wealthy – group in society.

To ensure autonomous vehicles are well integrated on our roads, we’ll need a diverse group of experts to enter into a dialogue. These include car manufacturers, policymakers, computer scientists, human and social behaviour scientists, engineers and governmental bodies, among others.

They must come together to address the current challenges. This collaboration should aim to create a robust framework that accounts for the complexity and variability of real-world driving scenarios.

It would involve developing industry-wide safety protocols and standards, shaped by input from everyone with a stake in the matter, and ensuring that these standards can evolve as the technology advances.

The collaborative effort would also need to create open channels for sharing data and insights from real-world testing and simulations. It must also foster public trust through transparency and demonstrate the reliability and safety of AI systems in autonomous vehicles.

The post “Tesla’s recall of 2 million vehicles reminds us how far driverless car AI still has to go” by Prof Saber Fallah, Director of the Connected Autonomous Vehicles Lab at the University of Surrey, was published on 12/14/2023 by theconversation.com.