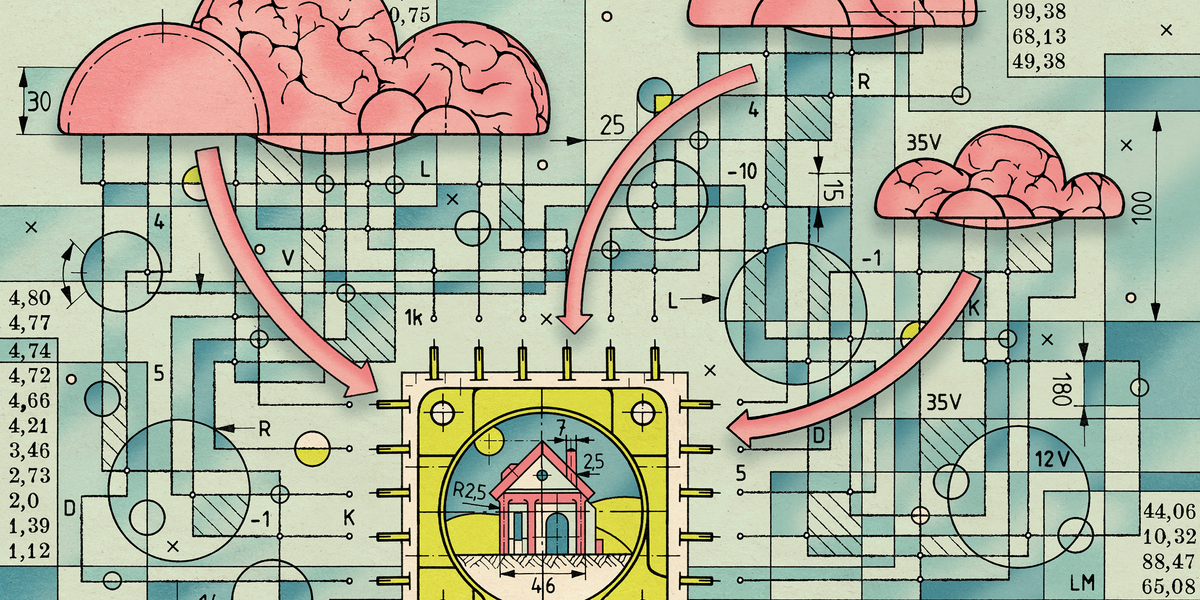

The next great chatbot will run at lightning speed on your laptop PC, no Internet connection required.

That, at least, was the vision recently laid out by Intel’s CEO, Pat Gelsinger, at the company’s 2023 Intel Innovation summit. Flanked by on-stage demos, Gelsinger announced the coming of “AI PCs” built to accelerate a growing range of AI tasks using only the hardware beneath the user’s fingertips.

Intel’s not alone. Every big name in consumer tech, from Apple to Qualcomm, is racing to optimize its hardware and software to run artificial intelligence at the “edge”—meaning on local hardware, not remote cloud servers. The goal? Personalized, private AI so seamless you might forget it’s “AI” at all.

“Fifty percent of edge is now seeing AI as a workload,” says Pallavi Mahajan, corporate vice president of Intel’s Network and Edge Group. “Today, most of it is driven by natural language processing and computer vision. But with large language models (LLMs) and generative AI, we’ve just seen the tip of the iceberg.”

With AI, cloud is king—but for how long?

2023 was a banner year for AI in the cloud. Microsoft CEO Satya Nadella raised a pinky to his lips and set the pace with a US $10 billion investment into OpenAI, creator of ChatGPT and DALL-E. Meanwhile, Google has scrambled to deliver its own chatbot, Bard, which launched in March; Amazon announced a $4 billion investment in Anthropic, creator of ChatGPT competitor Claude, in September.

These moves promised AI would soon revolutionize every aspect of our lives, but that dream has frayed at the edges. The most capable AI models today lean heavily on data centers packed with expensive AI hardware that users must access over a reliable Internet connection. Even so, AI models accessed remotely can of course be slow to respond. AI-generated content—such as a ChatGPT conversation or a DALL-E 2–generated image—can stall out from time to time as overburdened servers struggle to keep up.

Oliver Lemon, professor of computer science at Heriot-Watt University, in Edinburgh, and colead of the National Robotarium, also in Edinburgh, has dealt with the problem firsthand. A 25-year veteran in the field of conversational AI and robotics, Lemon was eager to use the largest language models for robots like Spring, a humanoid assistant designed to guide hospital visitors and patients. Spring seemed likely to benefit from the creative, humanlike conversational abilities of modern LLMs. Instead, it found the limits of the cloud’s reach.

“[ChatGPT-3.5] was too slow to be deployed in a real-world situation. A local, smaller LLM was much better. My impression is that the very large LLMs are too slow to use for speech-based interaction,”…

The post “When AI Unplugs, All Bets Are Off” by Matthew S. Smith was published on 12/01/2023 by spectrum.ieee.org