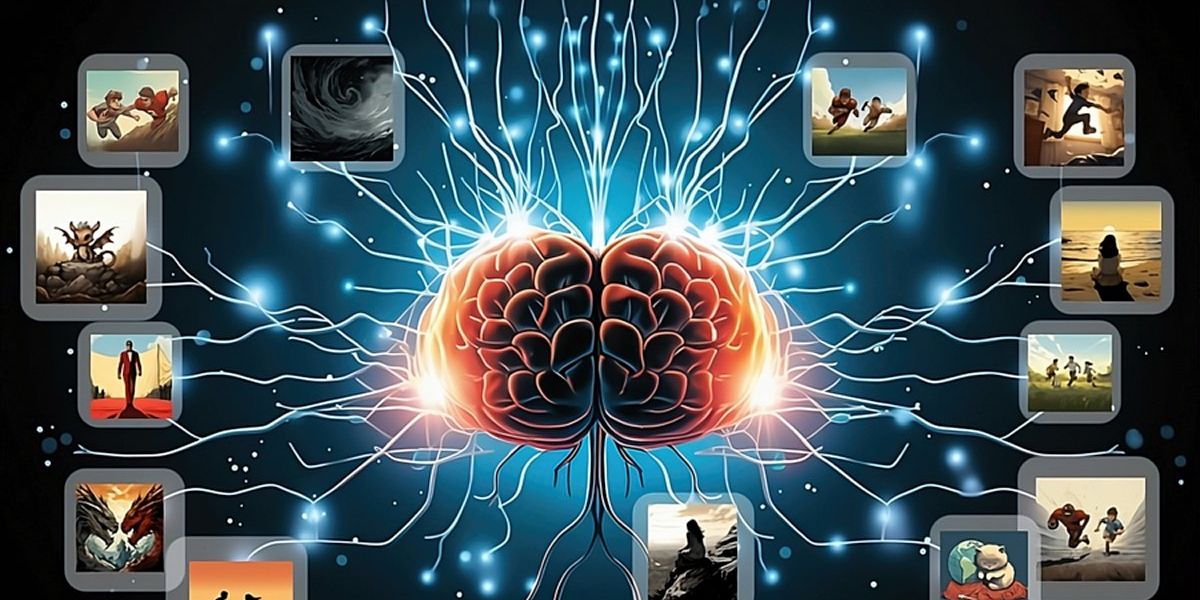

AI systems may perform better at identifying pictures when they are trained on AI-generated art that has been tailored to “teach” concepts behind the images, a new study finds.

Artificial intelligence systems, such as those used to recognize faces, are usually trained on data collected from the real world. In the 1990s, researchers manually captured photographs to build image collections, while in the 2000s, they started trawling the Internet for data.

However, raw data often includes significant gaps and other problems that, if not accounted for, can lead to major blunders. For example, commercial facial-recognition systems were frequently trained on image databases whose pictures of faces were predominantly light-skinned. As a result, they tended to fare poorly at recognizing dark-skinned people. Curating databases to address these kinds of flaws is typically a costly, laborious affair.

“Our study is among the first to demonstrate that using solely synthetic images can potentially lead to better representation learning than using real data.”

—Lijie Fan, MIT

Scientists had previously suggested that AI art generators might be able to help avoid the problems that come with collecting and curating real-world images. Now researchers find that image-recognition AI systems trained on synthetic art can actually perform better than ones fed pictures of the real world.

Text-to-image generative AI products such as DALL-E 2, Stable Diffusion, and Midjourney now regularly conjure images based on text descriptions. “These models are now capable of generating photorealistic images of exceptionally high quality,” says study co-lead author Lijie Fan, a computer scientist at MIT. “Moreover, these models offer significant control over the content of the generated images. This capability enables the creation of a wide variety of images depicting similar concepts, offering the flexibility to tailor the dataset to suit specific tasks.”

In the new study, researchers developed an AI learning strategy dubbed StableRep. This new technique fed AIs pictures that Stable Diffusion generated when given text captions from image databases such as RedCaps.

With StableRep, the scientists generated multiple pictures from each text prompt and had the AI system treat these images as depictions of the same underlying theme. The aim of this strategy was to help the neural network learn more about the concepts behind the images.
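One common way to express "images from the same caption depict the same theme" is a multi-positive contrastive loss, in which every other image generated from the same prompt counts as a correct match. The article does not spell out the exact formulation, so the sketch below is an illustration under that assumption; the function name, the `temperature` value, and the toy embeddings are all hypothetical.

```python
import numpy as np


def multi_positive_contrastive_loss(embeddings, caption_ids, temperature=0.1):
    """Illustrative multi-positive contrastive loss (not the study's code).

    embeddings:  (n, d) array, one row per generated image.
    caption_ids: length-n sequence; images sharing an id came from the same
                 text prompt and are treated as positives for each other.
    """
    z = np.asarray(embeddings, dtype=np.float64)
    ids = np.asarray(caption_ids)
    n = len(ids)

    # Compare images by cosine similarity, scaled by the temperature.
    z = z / np.linalg.norm(z, axis=1, keepdims=True)
    logits = (z @ z.T) / temperature

    # An image is never compared with itself.
    self_mask = np.eye(n, dtype=bool)
    logits = np.where(self_mask, -1e9, logits)

    # Positives: other images generated from the same caption.
    positives = (ids[:, None] == ids[None, :]) & ~self_mask
    counts = positives.sum(axis=1, keepdims=True)
    if np.any(counts == 0):
        raise ValueError("every caption needs at least two images")

    # Row-wise log-softmax, computed stably.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))

    # Cross-entropy against a uniform target over each anchor's positives.
    target = positives / counts
    return float(-(target * log_probs).sum(axis=1).mean())


if __name__ == "__main__":
    # Toy embeddings: rows 0-1 are close together, rows 2-3 are close together.
    toy = np.array([[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]])
    print(multi_positive_contrastive_loss(toy, [0, 0, 1, 1]))
```

The loss is small when images from the same prompt land near each other in embedding space and large when they do not, which is the pressure that pushes a network toward the shared concept rather than pixel-level detail.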

In addition, the researchers developed StableRep+. This enhanced strategy trained not only on pictures but also on the text of their captions. In experiments, when trained with 10 million synthetic images, StableRep+ displayed an accuracy of 73.5 percent. In contrast, the AI system CLIP showed an accuracy of 72.9 percent when trained on 50 million real images and captions. In other words, StableRep+ achieved comparable performance with a data source that’s one-fifth as big.
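The comparison above comes down to simple arithmetic on the figures quoted in the article. A quick check, with variable names chosen here purely for illustration:

```python
from fractions import Fraction

# Figures as quoted in the article.
stablerep_plus_images = 10_000_000   # synthetic images
clip_images = 50_000_000             # real images and captions
stablerep_plus_accuracy = 73.5       # percent
clip_accuracy = 72.9                 # percent

# Relative size of the two training sets, as an exact fraction.
data_ratio = Fraction(stablerep_plus_images, clip_images)

# Accuracy difference in percentage points, rounded to one decimal place.
accuracy_gap = round(stablerep_plus_accuracy - clip_accuracy, 1)

print(f"Training-set size ratio: {data_ratio}")
print(f"Accuracy gap: {accuracy_gap} percentage points")
```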

“Our study is among the first to demonstrate that using solely synthetic images can…

Read full article: Synthetic Art Could Help AI Systems Learn

The post “Synthetic Art Could Help AI Systems Learn” by Charles Q. Choi was published on 12/08/2023 by spectrum.ieee.org